Introduction

Today I want to talk about how to perform Search Engine Optimization (SEO) for your website. In this article, I am going to share some simple actions that I took for this website (https://mincong.io). They include the following items:

- Add robots.txt for web robots

- Submit a sitemap file

- Use structured data (schema markup)

- Optimize title tags

- Fix URL errors

- Understand what keywords users are searching

- Use SEO analyzer

Now, let’s get started!

Add robots.txt

Website owners use the /robots.txt file to give instructions about their site to web robots; this is called the Robots Exclusion Protocol. A sample file looks like:

User-agent: *

Disallow: /secret.html

This gives all robots complete access to the site, except for the secret.html file.
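To check locally how a well-behaved robot would read these rules, one option is Python's standard-library `urllib.robotparser`. The following is a minimal sketch, not a definitive validator; the host `example.com` is a placeholder assumed for illustration.

```python
from urllib.robotparser import RobotFileParser

# The sample rules from above, as they would appear in /robots.txt.
rules = """\
User-agent: *
Disallow: /secret.html
"""

parser = RobotFileParser()
parser.parse(rules.splitlines())

# example.com is a placeholder host, not a real deployment.
secret_allowed = parser.can_fetch("*", "https://example.com/secret.html")
index_allowed = parser.can_fetch("*", "https://example.com/index.html")

print("secret.html allowed:", secret_allowed)
print("index.html allowed:", index_allowed)
```

With these rules, the parser should report secret.html as blocked and every other path as allowed.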

Note that robots can ignore your /robots.txt. In particular, malware robots that scan the web for security vulnerabilities and email address harvesters used by spammers will pay no attention to it. For more information, visit http://www.robotstxt.org or Google Search: Robots.txt Specifications.

There are free tools, such as https://www.websiteplanet.com/webtools/robots-txt/, that analyze your robots.txt file and report any errors or warnings.

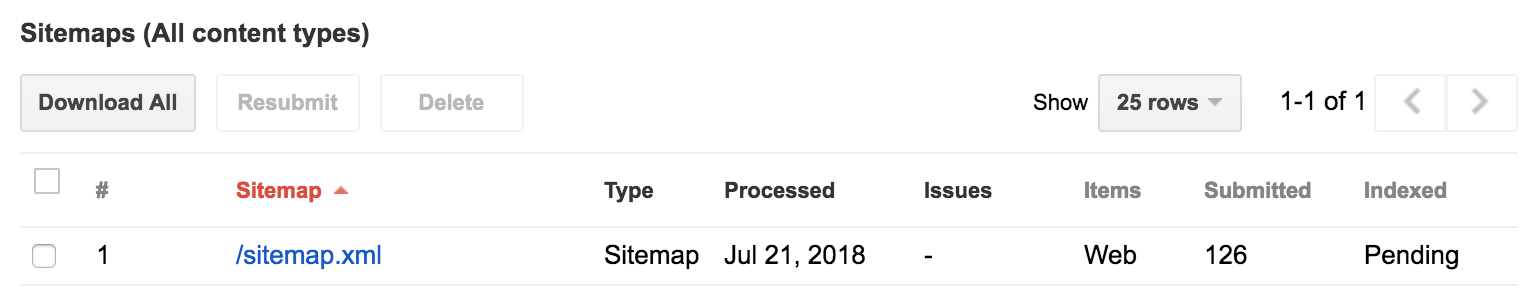

Submit a Sitemap File

A sitemap helps Google better understand how to crawl your site. Google introduced the Sitemaps protocol so web developers can publish lists of links from across their sites. The basic premise is that some sites have a large number of dynamic pages that are only reachable through forms and user input. Sitemap files contain URLs to these pages so that web crawlers can find them. Bing, Google, Yahoo and Ask jointly support the Sitemaps protocol.

I’m using the Jekyll plugin jekyll-sitemap to generate the sitemap. The generated result looks like:

<urlset xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance" xmlns="http://www.sitemaps.org/schemas/sitemap/0.9" xsi:schemaLocation="http://www.sitemaps.org/schemas/sitemap/0.9 http://www.sitemaps.org/schemas/sitemap/0.9/sitemap.xsd">

<url>

<loc>https://mincong-h.github.io/2018/05/04/git-and-http/</loc>

<lastmod>2018-05-04T15:31:59+00:00</lastmod>

</url>

</urlset>

Once generated, the result is available at https://mincong-h.github.io/sitemap.xml. Test and submit this result to Google Search Console.
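For a site that is not built with Jekyll, a file of the same shape can be produced by hand. Here is a minimal sketch using Python's standard-library `xml.etree.ElementTree`. The function name `build_sitemap` is an assumption made for illustration and this is not how jekyll-sitemap works internally. The `xsi:schemaLocation` attribute from the sample above is left out for brevity.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(pages):
    """Return sitemap XML for an iterable of (url, lastmod) pairs."""
    # Setting xmlns as a plain attribute keeps the output free of prefixes.
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod
    return ET.tostring(urlset, encoding="unicode")


sitemap_xml = build_sitemap([
    ("https://mincong-h.github.io/2018/05/04/git-and-http/",
     "2018-05-04T15:31:59+00:00"),
])
print(sitemap_xml)
```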

You can also provide the sitemap location in the robots.txt file, so that the sitemap can be found by all web crawlers (Google, Bing, Yahoo!, Baidu…):

sitemap: https://mincong-h.github.io/sitemap.xml
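To confirm that a crawler can pick up this directive, the same standard-library parser can be used. This is a small sketch assuming Python 3.8 or later, where `RobotFileParser.site_maps()` is available. The `Disallow` rule is carried over from the earlier sample only to make the file complete.

```python
from urllib.robotparser import RobotFileParser

robots_txt = """\
User-agent: *
Disallow: /secret.html

sitemap: https://mincong-h.github.io/sitemap.xml
"""

parser = RobotFileParser()
parser.parse(robots_txt.splitlines())

# site_maps() returns a list of sitemap URLs, or None if none were declared.
sitemaps = parser.site_maps()
print(sitemaps)
```

The directive name is matched case-insensitively by this parser, so both `sitemap:` and `Sitemap:` are read the same way.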

For more advanced Sitemaps configuration, see Sitemaps - Wikipedia.

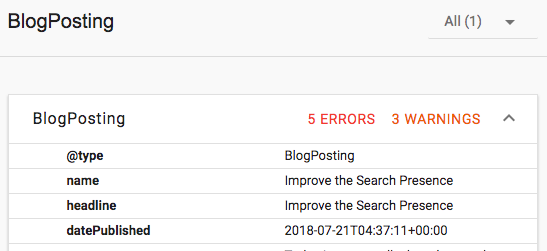

Use Structured Data (Schema Markup)

Structured data helps Google understand the content on your site, which can be used to display rich snippets in search results. Structured data is a standardized format for providing information about a page and classifying the page content.

You can use Rich Results Test - Google Search Console to test an existing page by giving its URL, then fix the errors and warnings based on Google’s feedback.

You can use Google Data Highlighter to highlight the data, or you can add schema programmatically in your source code. I think the most practical approach is to start with Google Data Highlighter. Once you understand the essentials, switch to writing the schema yourself. Then continuously improve each field and stay aligned with https://schema.org.

- Use Google Data Highlighter to understand the basics

- Embed structured data in your website pages

- Continuously improve your structured data using https://schema.org
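As an illustration of the second step, structured data is commonly embedded as a JSON-LD `<script>` tag. The sketch below builds one for a schema.org `BlogPosting`. The function name, the chosen fields, and all values are assumptions for illustration, not the markup actually used on this site; check the output with the Rich Results Test before relying on it.

```python
import json


def blog_posting_jsonld(headline, url, date_published, author_name):
    """Return a <script> tag holding schema.org BlogPosting data as JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "BlogPosting",
        "headline": headline,
        "url": url,
        "datePublished": date_published,
        "author": {"@type": "Person", "name": author_name},
    }
    body = json.dumps(data, indent=2)
    return f'<script type="application/ld+json">\n{body}\n</script>'


# All values below are placeholders.
snippet = blog_posting_jsonld(
    headline="Example post title",
    url="https://example.com/example-post/",
    date_published="2018-05-04",
    author_name="Jane Doe",
)
print(snippet)
```

The resulting tag would go in the page's HTML, typically inside `<head>`.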

Optimize Title Tags

Use descriptive, unique keywords. Title tags have withstood the test of time. They’re still a big part of how your site will perform. Make sure that every one of your title tags is descriptive, unique, and catered to your targeted keywords. Avoid using the same keywords and title tags over and over. This way, you’ll diversify your opportunities while avoiding cannibalizing your own efforts.

6 tips from Neil Patel’s blog for title tag optimization:

- Use pipes (|) and dashes (–) between terms to maximize your real estate.

- Avoid ALL CAPS titles. They’re just obnoxious.

- Never keep default title tags like “Product Page” or “Home.” They trigger Google into thinking you have duplicate content, and they’re also not very convincing to users who are looking for specific information.

- Put the most important and unique keywords first.

- Don’t overstuff your keywords. Google increasingly values relevant, contextual, and natural strings over mechanical or forced keyword phrases.

- Put your potential visitors before Google – title tags can make-or-break traffic and conversions.
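Some of these tips can be checked mechanically. The sketch below flags ALL CAPS titles, default titles, and titles reused on several pages. The function name `audit_titles`, the set of default titles, and the sample pages are assumptions for illustration; the remaining tips (keyword order, overstuffing) need human judgment.

```python
from collections import Counter

# Default titles mentioned in the tips; extend as needed.
DEFAULT_TITLES = {"home", "product page"}


def audit_titles(titles):
    """titles maps page path -> title text; returns a list of (path, problem)."""
    problems = []
    counts = Counter(t.strip().lower() for t in titles.values())
    for path, title in titles.items():
        normalized = title.strip().lower()
        letters = [c for c in title if c.isalpha()]
        if letters and all(c.isupper() for c in letters):
            problems.append((path, "all caps"))
        if normalized in DEFAULT_TITLES:
            problems.append((path, "default title"))
        if counts[normalized] > 1:
            problems.append((path, "duplicate title"))
    return problems


# Hypothetical pages and titles.
report = audit_titles({
    "/": "Home",
    "/seo/": "SEO BASICS FOR BLOGS",
    "/a/": "Git and HTTP | Example Blog",
    "/b/": "Git and HTTP | Example Blog",
})
for path, problem in report:
    print(path, "->", problem)
```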

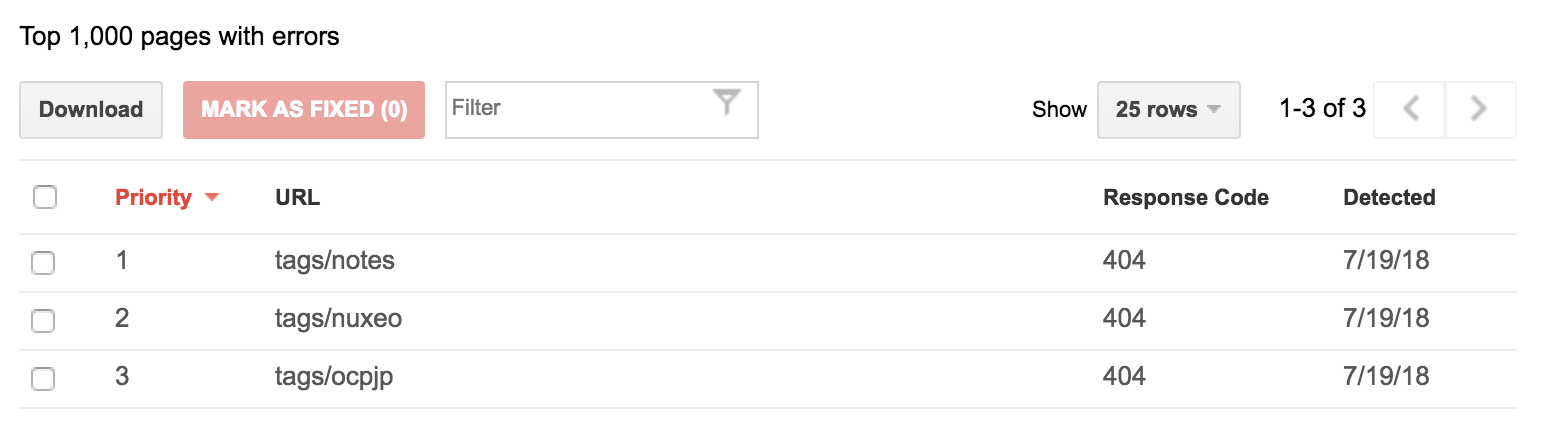

Fix URL Errors

Web crawlers can show you which URLs are broken:

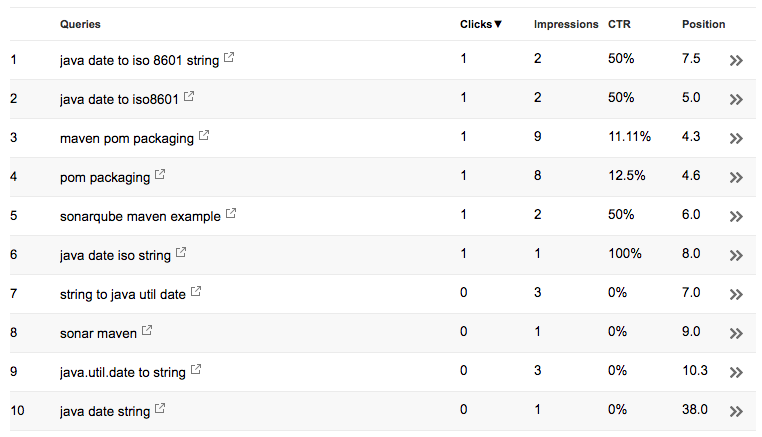
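Broken internal links can also be caught before a crawler reports them. The following is a rough sketch that scans rendered HTML for `href` values starting with `/` and reports those that match no known page. The names `LinkCollector` and `find_broken_internal_links` and the sample pages are assumptions for illustration; external links and redirects are not checked.

```python
from html.parser import HTMLParser


class LinkCollector(HTMLParser):
    """Collect the href value of every <a> tag fed to the parser."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


def find_broken_internal_links(pages):
    """pages maps site path -> HTML; returns (page, href) pairs with no target."""
    broken = []
    for path, html in pages.items():
        collector = LinkCollector()
        collector.feed(html)
        for href in collector.links:
            # Ignore fragments and query strings when matching paths.
            target = href.split("#", 1)[0].split("?", 1)[0]
            is_internal = target.startswith("/") and not target.startswith("//")
            if is_internal and target not in pages:
                broken.append((path, href))
    return broken


# Hypothetical site content.
site = {
    "/": '<a href="/about/">About</a> <a href="/old-post/">Old post</a>',
    "/about/": '<a href="/#top">Home</a> <a href="https://schema.org">Schema</a>',
}
broken_links = find_broken_internal_links(site)
print(broken_links)
```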

Understand User Queries

Once you’ve configured the sitemap and structured data, you can view search analytics in Google Search Console - Search Analytics. For example, the summary for your website shows:

- Total clicks. How many times a user clicked through to your site. How this is counted can depend on the type of search result.

- Total impressions. How many times a user saw a link to your site in search results. This is calculated differently for images and other search result types.

- Average CTR. It is the percentage of impressions that resulted in a click.

- Average position. It is the average position in search results for your site.

You can also see the search queries used.

Once you’ve understood the user queries, you can improve the post title and post description accordingly.
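One way to decide which posts to revisit first is to look for queries with many impressions but a low click-through rate. The sketch below computes CTR as clicks divided by impressions, as defined above. The query rows and both thresholds are made-up values for illustration, not data from this website or recommendations from Google.

```python
def click_through_rate(clicks, impressions):
    """CTR as a fraction: clicks divided by impressions (0.0 if never shown)."""
    return clicks / impressions if impressions else 0.0


def low_ctr_queries(rows, min_impressions=100, max_ctr=0.02):
    """Return queries seen often enough whose CTR is below max_ctr."""
    return [
        row["query"]
        for row in rows
        if row["impressions"] >= min_impressions
        and click_through_rate(row["clicks"], row["impressions"]) < max_ctr
    ]


# Hypothetical rows, shaped like a Search Analytics export.
rows = [
    {"query": "jekyll sitemap", "clicks": 30, "impressions": 500},
    {"query": "git http", "clicks": 2, "impressions": 400},
    {"query": "seo tips", "clicks": 1, "impressions": 20},
]

candidates = low_ctr_queries(rows)
print(candidates)
```

Pages that rank for the returned queries are reasonable candidates for a clearer title or description.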

Use SEO Analyzer

Use online SEO Analyzers to analyze and get suggestions for your website. I used https://neilpatel.com, but you can use others as you wish. An SEO analyzer helps you to know:

- Website-level SEO analysis (errors, warnings, and advice)

- Page-level SEO analysis (errors, warnings, and advice)

References

- GitHub: jekyll-sitemap

- Google: Build and submit a sitemap

- Google: Introduction to Structured Data

- Google Search: Robots.txt Specifications

- Google Search: Introduction

- Moz: Using Google Tag Manager to Dynamically Generate Schema/JSON-LD Tags

- Webmasters: Blog and BlogPosting for Google

- Wikipedia: Sitemaps

- The Web Robots Pages

- Schema.org: Full Hierarchy

- Neil Patel: The Step-by-Step Guide to Improving Your Google Rankings Without Getting Penalized